Journal of Creation 27(3):64–71, December 2013


Explaining robust humans

This paper attempts to explain the ‘robusticity’ of the fossil remains of ‘robust’ humans. A connection with long lifespans is suggested. Changes in development, linked to longevity and most likely involving thyroid hormone secretion patterns, are proposed as the primary mechanism causing robusticity. That is, robust humans would have had a different amount of thyroid hormone available for skeletal growth at particular stages of development than extant humans have. The nature of the ‘hobbit’ (Homo floresiensis) is revisited in order to find clues as to the small size of some Homo erectus crania.

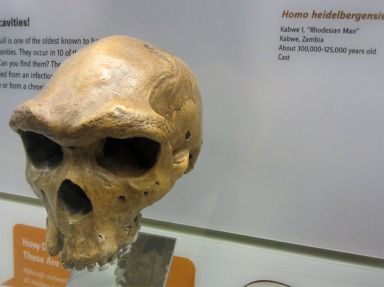

One of the interesting questions in human origins, from a creation position, is as follows. Why were robust humans, such as Neandertals, Homo heidelbergensis and Homo erectus, who were fully human (i.e. descendants of Adam and Eve), generally more ‘robust’ in morphology, that is, with a heavily built or large body or body part, than extant humans, who have a more ‘gracile’ (delicate and slender) build with weaker muscle attachments and bony buttresses? When the robust morphology of fossil specimens such as the Neandertals and H. erectus is discussed, it is often the skull that is referred to, but the postcranial bones also exhibit robusticity.

Differences between skulls of robust humans

The first question to tackle is why there are differences between robust skulls, such as those of Neandertals and H. erectus. As indicated by the late evolutionary anthropologist Harry Shapiro, who was Chairman Emeritus of the Department of Anthropology at the American Museum of Natural History, some of the differences may have just been size-related:

“But when one examines a classic Neanderthal skull, of which there are now a large number, one cannot escape the conviction that its fundamental anatomical formation is an enlarged and developed version of the Homo erectus skull. As in Homo erectus, it has the bun-shaped protrusion in the occiput, the heavy brow ridge, the relatively flattened crown that from the rear presents a profile like a gambrel roof. Its greatest breadth is low, just above the ears, and the absence of a jutting chin is typical.”1

More recently paleoanthropologist Daniel Lieberman, an expert on the supposed ‘evolution’ of the human head, comments that:

“H. heidelbergensis and H. neanderthalensis crania are generally H. erectus-like but with a slight increase in face size and relative brain size. In contrast, H. sapiens represents a shift in craniofacial architecture, with a retracted, smaller face, and a more spherical vault.”2

Hence, while there are alleged differences between the main groups of robust humans, it appears the bigger difference is between extant humans and robust humans. Consider the ‘artificial’ species H. heidelbergensis (figure 1). When viewing casts of these fossil skulls it is not obvious what exactly differentiates them from H. erectus, apart from the larger size of some of them. Are we really dealing with different species, or just different-sized specimens of the same species; with perhaps some of them subjected to different environmental influences?

Seemingly bucking the trend of H. erectus being small-brained is the Jinniushan skull from China, dated by evolutionists to about 200,000 years ago. It has a cranial capacity of 1,390 cc (cubic centimetres) and is tentatively assigned to late H. erectus by some.3 It seems the specimen could easily be assigned to H. heidelbergensis, particularly as it is said to have a ‘primitive–advanced mix’ of features,3 and indeed it has been grouped with H. heidelbergensis by others.4 However, to some evolutionary experts an inclusion of the Jinniushan skull (and certain other Chinese fossil skulls) in H. heidelbergensis clashes with other ideas about human origins, and so such an assignment is rejected; not because of morphology per se, but because of factors related to alleged age, location and ideas about brain expansion.5 Interestingly, postcranial remains from the Jinniushan specimen have been suggested as resembling those of Neandertals.6 The seeming erectus–heidelbergensis interchangeability of the Jinniushan skull, as convenience dictates, does nothing to dispel the notion that H. erectus, H. heidelbergensis, and the Neandertals belong to the same species. Admittedly, there may be regional variations in form, as there are among people groups today, and it may also be that particularly small skulls scale down disproportionately in some features. However, such differences may be caused by factors that have nothing to do with human evolution as normally understood.7

Consider also that, in the same way that the Babel dispersion may have led to different groups of people taking different subsets of genes for certain traits (for example, blood types), the dispersion may also have led to different groups taking different subsets of genes for variation in bony characters. Hence, as the people groups migrated away from Babel, variants of robust humans, such as H. erectus, H. heidelbergensis and Neandertals, arose through a combination of genetic variation in bony characters and local environmental factors, in a similar way that other ‘racial’ characteristics of people groups became established. As time went by some traits became dominant in some people groups (possibly through natural selection, but more likely through genetic drift due to inbreeding), but were absent, or close to absent, in others. The presence or absence of a chin may be one such bony character state that is under genetic control. Most robust humans tend to have an undeveloped or absent chin, indicating this trait was much more frequent in earlier human populations. There are still extant people without a well-defined chin,8 indicating that this is a part of normal human variability. However, the trait has certainly decreased in today’s population.

Longevity of early humans

It is reasonable to assume that most of these robust humans lived during the first few centuries immediately after the Flood, given their general geological association with post-Flood deposits.9 This raises the question of why early post-Flood humans appear to have been more robust. In the model proposed here it is suggested that the morphology of these early post-Flood robust humans reflects that of their immediate pre-Flood ancestors, and that the answer to the question about robusticity lies with the longevity of earlier humans.

A factor that cannot be ignored in a creationist model of human origins is the long lifespans of individuals in the pre-Flood world, and also on the early post-Flood earth, as derived from Old Testament records. In the pre-Flood world lifespans of around 900 years appear to have been common, and even individuals born early post-Flood (within a few hundred years of the Flood) are recorded as having lived for hundreds of years, much longer than the 70 or so years that individuals live today, although relatively few individuals may live beyond 100 years.10

Longevity and robusticity

So how can long lifespans be related to robusticity? If initially, after the ‘fall of man’, humans were designed to live for hundreds of years, then this would most likely have a bearing on development processes and timings. Simply put, longevity would probably be associated with changes in development,11 not just the aging process. From a design point of view, to last longer it may be that we originally had to be built stronger—and as such, this would have to be incorporated into development early on.

Having thickened cranial vault bones, a heavily built face, thick-boned jaws, and thick postcranial bones may have been necessary for the body to cope with these long lifespans. Consider also the current rate of bone mass loss in extant humans with normal aging, beginning between ages 30 and 40, where “women lose about 8 percent of their skeletal mass every decade”, compared to 3 percent per decade deterioration for men.12 Such a rate seems untenable for lifespans of hundreds of years. In this regard alone something must have been different in earlier humans with long lifespans.
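As a rough illustration of why such a rate seems untenable, the quoted per-decade losses can be projected over longer lifespans. The sketch below is only a back-of-the-envelope calculation under assumptions that are not in the cited textbook: loss is taken to begin at age 35 (the midpoint of the quoted 30 to 40 range), and the percentage loss is taken to compound at a constant rate each decade. The function name is hypothetical.

```python
def remaining_bone_mass(age_years, loss_per_decade, onset_age=35.0):
    """Fraction of peak skeletal mass left at a given age.

    Assumptions (illustrative only): loss starts at onset_age and
    compounds at a constant fractional rate per decade afterwards.
    """
    if age_years <= onset_age:
        return 1.0
    decades = (age_years - onset_age) / 10.0
    return (1.0 - loss_per_decade) ** decades


# Quoted rates: about 8 percent per decade for women, 3 percent for men.
for age in (70, 100, 300, 900):
    women = remaining_bone_mass(age, 0.08)
    men = remaining_bone_mass(age, 0.03)
    print(f"age {age:>3}: women {women:.1%} of peak, men {men:.1%} of peak")
```

Under these assumptions, a woman reaching 900 years would retain well under 1 percent of her peak skeletal mass, and a man less than a tenth of his, which is the sense in which present-day rates of loss appear incompatible with lifespans of hundreds of years.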

Neandertals and Jack Cuozzo

Creationist Jack Cuozzo, author of the book on Neandertals, Buried Alive,13 has long suggested that the Neandertals were post-Flood people with extended longevity. Cuozzo’s position is that the distinctive morphological characteristics of Neandertals were the result of living to a very old age (hundreds of years).14 For example, he is said to have attributed the Neandertal browridges to having been “formed merely from old age and normal chewing”.15 He believes that Neandertal children displayed a slow rate of maturation compared to present children, and hence took longer to reach adulthood.16

In the model presented here it is suggested that changes in growth rates and timing of developmental processes between robust children and extant children are responsible for differences in robusticity between the adults of each group; and that these are linked to the genetics of longevity. Whether Neandertal children displayed a slow rate of maturation17—that is, took a longer period of development to attain maturity18 or complete their growth—may or may not be so. But a longer maturation period would not necessarily follow from changes in developmental processes, nor is it necessarily a concomitant to longevity.

As distinct from Cuozzo, I believe that the key features of the Neandertal morphology arose (influenced to varying degrees by environmental factors) during the developmental process, and not during the aging process. If they were due to the aging process, every Neandertal individual whose fossil remains have been discovered must, by default, have lived hundreds of years, which is unlikely; especially when some of the bony characteristics that distinguish these robust humans from moderns are already present in Neandertal specimens that are obviously still a long way from attaining adulthood, however long that may have taken.

However, if the characteristic Neandertal features were chiefly the result of development processes (genetically linked to greater longevity factors), then some of the Neandertal fossils could be of individuals with the potential to live hundreds of years, and as such built robustly, but who died at a relatively young age. Such a proposal would also account for the presence of these features in Neandertal children.

It should also be pointed out that in Cuozzo’s view, specimens attributed to H. erectus are regarded as apes.19,20 This is at odds with my position that they were fully human.

Note that robust humans did not necessarily live long lives. In the case of Neandertals, many appear to have died early, often from injury, and not usually from causes associated with old age. According to paleoanthropologist and Neandertal expert Chris Stringer, “the Neanderthals suffered many bodily injuries”, and dying at the age of about forty was “a very respectable age for a Neanderthal”.21 Stringer also comments that:

“… Caspari’s study of the seventy-five or so Neanderthals from the site of Krapina in Croatia showed no individuals were likely to have been older than thirty-five at death, so there were not many grandparents around … .”22

How accurate the above age estimates are is hard to say. However, regardless of how long individual adult Neandertals lived, they would still have been built robustly during development if they were genetically programmed to live longer. And this is the important part, as it is in development that most of the distinctive features of Neandertal cranial morphology become present,23,24,25 not during the aging process. According to evolutionist Paul Jordan:

“The Neanderthal children are interesting because they demonstrate that many of the noticeable physical distinctions of their people, like the heavy brow arches and the general robusticity of their bodies, put in an early appearance in the life of each and every Neanderthaler.”26

Thyroid hormone

If longevity was linked to development processes associated with robusticity, then robust features would be expected to disappear with shorter lifespans. The genetic mechanism for robusticity likely involves the control of one or several hormones involved in bone growth and maintenance,27 for example through differences in the regulation of the pattern of thyroid hormone secretion between extant humans and robust humans. The thyroid gland produces thyroid hormone, as well as the hormone calcitonin. Thyroid hormone is actually two iodine-containing hormones, thyroxine (or T4) and triiodothyronine (or T3), with T4 being the major hormone secreted by the thyroid follicles, and most T3 being formed at the target tissues by conversion of T4 to T3. Thyroid hormone “is important in regulating tissue growth and development. It is critical for normal skeletal and nervous system development and maturation and for reproductive capabilities.”28

According to Susan Crockford, an evolutionary expert in this area:

“The distinctive skeletal morphology possessed by Neandertals is almost certainly the result of a pattern of thyroxine secretion (and, therefore, of prenatal and postnatal growth rates) that differed markedly and consistently from that of modern humans. These Neandertal traits may resemble superficially the pathological changes associated with congenital iodine deficiency because they reflect different amounts of thyroxine available for skeletal growth at particular stages of development as compared with healthy modern humans.”29

Thus, a master switch controlling a certain thyroid hormone secretion pattern might exist in people with robust skeletal morphology, such as the Neandertals—which is linked to the genetic mechanism for long lifespans. While the precise genetic mechanism for any such secretion pattern of thyroid hormones (THs) is unclear, there are clues.

According to Crockford:

“While genes at other sites may modify the ultimate rhythmic pattern of THs, output of several so-called ‘clock genes’—found in circadian oscillator cells that reside in the suprachiasmatic nuclei (SCN) of the anterior hypothalamus—are likely the origin of pulsatile production … . Even very slight individual variation in the efficiency of such genes (eight identified so far) may have dramatic repercussions for traits downstream through their affects on secretion of the pineal gland hormone melatonin.”30

Basically, pulsatile melatonin release from the pineal gland stimulates the pulsatile secretion of thyrotropin-releasing hormone (TRH) from the hypothalamus, which leads to bursts of thyroid-stimulating hormone (TSH) from the pituitary gland, and this in turn leads to the pulsatile release of THs from the thyroid gland.31

Crockford also states that:

“Changes in the rates and timing of prenatal and postnatal development in ancestral species make possible a wide variety of size and shape differences in descendant populations, a well-known evolutionary pattern called ‘heterochrony.’ It is without question the most common mode of speciation … . Although identification of the precise biological trigger that implements such developmental change has proved elusive, hormonal involvement has long been suspected.”29

While Crockford sees mutations in the “suite of genes that generates thyroid rhythm phenotypes … as providing the essential raw material, the individual variation, for natural selection to act upon during speciation”,29 such an evolutionary explanation is unnecessary.

Longevity genes

Humans living pre-Flood and early post-Flood may well have had longevity genes. These longevity genes may have also caused robusticity, or could have been linked to genes that caused robusticity.32 Or, alternatively, the robusticity could have allowed for longevity. However, the genes (or genetic mechanism) responsible for longevity, and hence robusticity, have since been lost or de-activated. Dr Carl Wieland has suggested that there may have been ‘longevity genes’ in earlier human populations that were subsequently lost via genetic drift.33 According to Wieland:

“The extinction of human lines with more robust morphology (Neanderthal, erectus) may correlate with extinction of longevity. The robusticity may be the result of genetic longevity/delayed maturation or the same populations may have had [possibly linked] genes for longevity and robusticity.”34

Changes in development

The nature of any developmental changes in skeletal morphology between robust and extant humans as a result of possible differences in the pattern of thyroid hormone secretion can only be speculated on. Anything to do with the mechanisms regulating rates and timing of development is very complex, and while it is suggested that thyroid hormone secretion is a major factor, because of its critical role in ensuring normal skeletal and nervous system development and maturation, it may not necessarily be the only factor.

The skull is probably where the most significant differences exist between robust and extant humans. Hence, clues to developmental changes that possibly occurred may be found by considering how extant human skulls develop, and what would happen if the growth rates of different parts of the skull changed. For example, the brain may contribute to differences. It is known that the human face and vault of the skull develop at different rates, and that this is largely due to the precocious or rapid development of the brain early on (the expanding brain being responsible for the form of the vault).35 Hence, if brain development was slowed down (or accelerated), then it might have significant implications for the form of the cranium. Also, the human face itself does not grow uniformly, with the upper face36 initially growing the fastest. According to craniofacial development expert Geoffrey Sperber:

“The upper third of the face initially grows the most rapidly, in keeping with its neurocranial association and the precocious development of the frontal lobes of the brain. It is also the first to achieve its ultimate growth potential, ceasing to grow significantly after 12 years of age. In contrast, the middle and lower thirds grow more slowly over a prolonged period, not ceasing growth until late adolescence … . Completion of the masticatory apparatus by eruption of the third molars (at 18 to 25 years of age) marks the cessation of growth of the lower two-thirds of the face.”37

The complicated growth pattern of the cranial base also plays an important role “in determining the final shape and size of the cranium and ultimately the morphology of the entire skull, including the occlusion of the dentition”.38 The main point in the above reference to skull growth is to highlight the interdependence of different parts of the skull (features usually do not develop in isolation), and also the prominent role that brain growth has in forming the ultimate shape of the skull. Hence, change the timing of brain growth and you will most likely change the spatial relationship between different parts of the skull, leading to differences in skull morphology.

According to Lieberman, anatomically modern humans differ from Neandertals, and other Homo taxa,

“… by only a few features. These include a globular braincase, a vertical forehead, a diminutive browridge, a canine fossa and a pronounced chin. Humans are also unique among mammals in lacking facial projection: the face of the adult H. sapiens lies almost entirely beneath the anterior cranial fossa, whereas the face in all other adult mammals, including Neanderthals, projects to some extent in front of the braincase.”39

Evolutionists have no problem incorporating changes in growth rates to explain peculiarities of alleged fossil hominids. For example, acceleration in the longitudinal growth of the cranial base early on in development of the Neandertals has been speculated to ultimately (because of a longer and flatter cranial base) “have impacted both vault and facial shape, and may have been responsible for many of the craniofacial differences between Neandertals and modern humans”.40

Changes in skull features tend not to happen as independent events, separate from all other features, but usually occur in concert with changes in other skull features.41 For example, evolutionists cite changes in the form of the braincase (e.g. increase in parietal breadth, broadening of the frontal bone behind the eye sockets and at the coronal suture, increase in height of the braincase, and expansion of the occipital plane of the occipital bone) as simply “byproducts of the expansion of the cerebral cortex relative to the brainstem, which causes the upper part of the braincase to get bigger relative to the rest of the skull”.41

Some evolutionists make similar arguments with respect to the face, suggesting “in one way or another” that some of the most significant differences in facial features (“massive, protruding supraorbital tori, their prognathic faces, and their receding, chinless mandibular symphyses”) that distinguish modern human faces from H. erectus and H. heidelbergensis (the authors cited use the terms Erectines and Heidelbergs instead)—or, more specifically, the loss of such features in H. sapiens—“reflects the changing morphology of the teeth”.41 While evolutionists look at it in terms of trends in human evolution, an evolutionary interpretation is not required; such an interpretation is simply a byproduct of a particular worldview.

What about the small size of Homo erectus skulls?

According to evolutionists Frederick Coolidge and Thomas Wynn:

“Modern human brains vary in size from 1,100 cc to almost 2,000 cc, and there is no known correlation between brain size and intelligence, however one chooses to measure it.”42

A well-known textbook on Anatomy & Physiology essentially says the same thing, although it expands the human variation in brain size:

“No correlation exists between brain size and intelligence. Individuals with the smallest brains (750 mL) and the largest brains (2100 mL) are functionally normal.”43

Despite the enormous variability of brain size noted above in functionally normal extant humans, a valid question is why the cranial capacities (which correlate with brain size) of fossil crania assigned to H. erectus are on average small compared with those of extant humans, with some even falling below the extreme lower end of the range considered normal in extant humans.44

What clues does the Hobbit offer?

On Thursday, 28 October 2004, media headlines such as “Lost race of human ‘hobbits’ unearthed on Indonesian island”, from The Age newspaper, were splashed all over the world. An overview of the early days of the hobbit controversy has previously been published by this author.45 The specimens were officially named Homo floresiensis but were dubbed ‘hobbits’. The original position of the authors of the Nature paper announcing the find was that H. floresiensis was a new species derived from an isolated ancestral H. erectus population that experienced endemic dwarfing.46 Since then many hypotheses have been put forth to explain the identity of H. floresiensis, including that it was just a variant of H. erectus,47 a new species derived from H. habilis,48 a microcephalic modern human,49 a small-brained australopithecine-like obligate biped,50 and a modern human cretin.51

In my opinion the cretinism hypothesis gives the best account of the postcranial anatomy of H. floresiensis, and convincing arguments have been made that this anatomy is consistent with cretinism.52,53 In the words of paleoanthropologist Dean Falk:

“Cretinism is a condition of stunted growth and mental retardation that result from a deficiency of thyroid hormone, which can occur for a variety of reasons, including a diet that lacks enough iodine. Infants of mothers deficient in iodine are likely to be born with the condition. Children with cretinism have broad faces with flat noses. If untreated, they become smaller for their age as they grow up, which results in dwarfed adults.”54

Cretinism brought about by environmental iodine deficiency is not a genetic disorder (cretins being the offspring of mothers with severe iodine deficiency),55 so it can occur anywhere there is iodine deficiency in the food chain, and as such can affect people in different parts of the world, although clinical features may vary.56,57 Iodine deficiency and cretinism have been a problem in recent historical times, and have still not been conquered.58 The suggestion is that H. floresiensis suffered from hypothyroid endemic cretinism (also called myxoedematous endemic cretinism), a condition resulting from being born without a functional thyroid gland.59,60 The environment where H. floresiensis was discovered, at Liang Bua on the Indonesian island of Flores, was most likely iodine-deficient.

According to evolutionist Charles Oxnard:

“Today’s goitre rates imply that prior hunter-gatherer populations in the hills should have been severely iodine-deficient and would regularly have produced cretins.

“Liang Bua itself is a limestone cave and nearby soils are alkaline and probably therefore iodine-deficient. The altitude is 500 m … . The site is reasonably remote from both the north and south coasts … . Fish bones found at Liang Bua are river fish that would be deficient in iodine. River waters (a river flow nearby) in such regions are low in iodine. All these factors would have precluded access to iodine-rich seafoods.”61

Complicating matters, though, is a recent detailed analysis of the LB1 H. floresiensis cranium (figure 2) by Yousuke Kaifu et al.,62 that included comparisons with a host of crania, such as the Georgian (Dmanisi) H. erectus crania, early Javanese H. erectus (Trinil and Sangiran series) crania, late Javanese H. erectus (Sambungmacan and Ngandong series) crania, Chinese H. erectus crania, African early and late H. erectus crania, H. heidelbergensis crania such as Kabwe and Bodo, as well as H. habilis crania.63 Although they included a large sample of modern human cranial measurements in their study, they do not appear to mention any modern pathological specimens as a comparative sample, so cretinism or microcephaly cannot be ruled out. From their analysis Kaifu and co-authors concluded, similarly to what was originally proposed in 2004, that:

“LB1 is most similar to early Javanese Homo erectus from Sangiran and Trinil in these and other aspects. We conclude that the craniofacial morphology of LB1 is consistent with the hypothesis that H. floresiensis evolved from early Javanese H. erectus with dramatic island dwarfism.”64

Does the LB1 skull fit the cretinism model, in particular the small brain size? The authors of the cretinism hypothesis argue that smaller parent populations in terms of brain size (e.g. pygmoid crania) could have given rise to cretins with endocranial volumes below 500 cc (LB1 hobbit territory).65 In a 2010 publication they stated:

“Thus, normal South East Asian pygmoid crania of 800–1000 ml have been recorded (refs in [7]) and estimated [30]). On this basis, cretins from such populations could have brain sizes as small as 400–500 ml, based on scaling of height and brain size found among European endemic cretins [7].”66

What is the most likely scenario? Postcranially, the best fit of H. floresiensis appears to be with cretinism, but the skull shares a lot of features with H. erectus. A likely scenario is that H. floresiensis was a robust-type human (e.g. H. erectus) with cretinism. To re-emphasize, specimens labeled H. erectus were descendants of Adam and Eve, and so fully human, most likely early post-Flood humans.

If the LB1 H. floresiensis cranium, most recently estimated to be 426 cc,67 belonged to a pathological robust human with cretinism, it raises interesting questions about similar pathology in other small-brained robust humans, such as the Dmanisi H. erectus specimens (figure 3)—where the cranial capacity of four crania ranges from 600 to 780 cc.68 It would not be that surprising, given that many features of cretinism mimic so-called ‘primitive’ features of evolution. According to Oxnard:

“It is remarkable that so many features similar to those normally present in great apes, in Australopithecus and Paranthropus, and in early Homo (e.g. H. erectus and even to some degree, H. neanderthalensis) but not in modern H. sapiens are generated in humans by growth deficits due to the absence of thyroid hormone. In other words, many of the pathological features of cretinism mimic the primitive characters of evolution making it easy to mistake pathological features for primitive characters. The differences can be disentangled by understanding the underlying biology of characters.”69

That the Dmanisi specimens are found in the same locality may not be that unusual. For example, Oxnard suggests that in “seasonally mobile hunter-gatherer groups”, in prior times, cretin children would:

“… be ostracised as adults by the wider community due to their abnormal features and behaviours. Unable to travel easily with a mobile community, especially unable to help build normal temporary dwellings in such a community, adult cretins might well separate and shelter in caves. If there were a reasonable number of them (say, conservatively) 5% of all births, they might indeed shelter together.”70

The above statement is of course very speculative, and alternative scenarios are possible, particularly as there is evidence that early people cared for the infirm. Maybe the cretins were cared for as a group by healthier members of the small, isolated community.

According to the analysis by Kaifu et al., the LB1 cranial shape most resembled the so-called ‘early’ Javanese H. erectus (the Trinil and Sangiran series) crania,64 not the ‘late’ Javanese H. erectus crania, such as the Ngandong series (also known as Solo Man). The latter are generally larger in cranial capacity, from 1,013 cc to 1,251 cc, while the former (the Trinil and Sangiran series crania) have cranial capacities ranging from 813 cc to 1,059 cc.71 This raises the question of whether some of these small-brained, so-called early Javanese H. erectus are also pathological robust humans with cretinism, albeit less extreme than the case of the hobbit, or whether the high proportion of small brain sizes in H. erectus is just part of the normal variation for robust humans.

If cretinism is still a problem in today’s world, with modern medicine and information about iodine deficiency at our disposal, how much more of a problem could it potentially have been for early post-Flood/post-Babel human populations migrating to uncharted regions of the earth, most likely unaware of the problem (or cause of the problem)—and probably having their hands full just surviving day to day? Hence, robust human populations settling in any iodine-deficient regions of Africa, Georgia, China, Indonesia, etc. may well have had a high incidence of cretinism.

Conclusion

The ideas put forth in this paper are speculative, and, given the topic, it could hardly be otherwise. Hence, the proposed model for interpreting robust humans will likely need revision as more is learned on this topic. Hopefully, outlining the model will stimulate further discussion and research in this area, as it is surely needed.

References and notes

1. Shapiro, H.L., Peking Man, Simon and Schuster, New York, p. 125, 1974.

2. Lieberman, D.E., The Evolution of the Human Head, The Belknap Press of Harvard University Press, Cambridge, MA, p. 580, 2011.

3. Klein, R.G., The Human Career: Human Biological and Cultural Origins, 3rd edn, The University of Chicago Press, Chicago, IL, p. 348, 2009.

4. Rightmire, G.P., Brain size and encephalization in Early to Mid-Pleistocene Homo, American J. Physical Anthropology 124:112, 2004; Tattersall, I., The Fossil Trail: How We Know What We Think We Know about Human Evolution, 2nd edn, Oxford University Press, New York, pp. 281, 288, 2009.

5. Klein, ref. 3, p. 312.

6. Cartmill, M. and Smith, F.H., The Human Lineage, Wiley-Blackwell, NJ, p. 431, 2009.

7. I.e. descent from a common ancestor with extant apes.

8. Jacob, T. et al., Pygmoid Australomelanesian Homo sapiens skeletal remains from Liang Bua, Flores: Population affinities and pathological abnormalities, Proceedings of the National Academy of Sciences 103:13423, 2006.

9. Where burial is in caves, for instance, the sediments within which those caves formed often contain marine fossils from the Deluge, indicating that the fossil human was post-Flood.

10. Wieland, C., Living for 900 years, Creation 20(4):10–13, 1998; creation.com/900.

11. Development is defined as “the gradual modification of anatomical structures and physiological characteristics during the period from fertilization to maturity”. See: Martini, F.H., Ober, W.C., Nath, J.L., Bartholomew, E.F., Garrison, C.W. and Welch, K., Visual Anatomy & Physiology, Benjamin Cummings, San Francisco, CA, p. 945, 2011.

12. Martini, F.H., Nath, J.L. and Bartholomew, E.F., Fundamentals of Anatomy & Physiology, 9th edn, Pearson Benjamin Cummings, San Francisco, CA, p. 192, 2012.

13. Cuozzo, J.W., Buried Alive, Master Books, Green Forest, AR, 1998.

14. Habermehl, A., Those enigmatic Neanderthals: What are they saying? Are we listening?, Answers Research J. 3:2, 7, 8, 10, 2010.

15. Habermehl, ref. 14, p. 8.

16. Habermehl, ref. 14, pp. 10–12.

17. Maturation is the process of becoming mature or attaining full development. The term is sometimes used in a different manner, but the core meaning (which is applicable here) is “the development process leading toward the state of maturity”. See: Reber, A.S., The Penguin Dictionary of Psychology, Penguin Books, London, p. 422, 1985.

18. Maturity is defined as “the state of full development or completed growth”. See: Martini et al., ref. 12.

19. Habermehl, ref. 14, p. 3.

20. Cuozzo, ref. 13, p. 101.

21. Stringer, C., The Origin of Our Species, Allen Lane, an imprint of Penguin Books, London, pp. 148–149, 2011.

22. Stringer, ref. 21, p. 152.

23. Tattersall, I., Masters of the Planet: The Search for Our Human Origins, Palgrave Macmillan, New York, p. 164, 2012.

24. Weaver, T.D., The meaning of Neandertal skeletal morphology, Proceedings of the National Academy of Sciences 106:16030, 2009.

25. Conroy, G.C., Reconstructing Human Origins, 2nd edn, W.W. Norton, New York, p. 524, 2005.

26. Jordan, P., Neanderthal: Neanderthal Man and the Story of Human Origins, Sutton Publishing, Phoenix Mill, p. 46, 1999.

27. For a list of hormones involved in bone growth and maintenance see: Martini et al., ref. 12, p. 185.

28. Marieb, E.N. and Hoehn, K., Human Anatomy & Physiology, 8th edn, Pearson Benjamin Cummings, San Francisco, CA, p. 609, 2010.

29. Crockford, S.J., Commentary: Thyroid hormone in Neandertal evolution: A natural or pathological role?, Geographical Review 92(1):73–88, 2002.

30. Crockford, S.J., Thyroid rhythm phenotypes and hominid evolution: a new paradigm implicates pulsatile hormone secretion in speciation and adaptation changes, Comparative Biochemistry and Physiology Part A 135:109, 2003. For an illustration of how the SCN regulates melatonin release see: Wright, K., Times of our lives, Scientific American 287(3):43, 2002. Even more important may be the finding of direct nerve connections between the SCN and the thyroid gland (essentially bypassing control of TH release by pituitary TSH), as this “provides a mechanism that explains how thyroid rhythms could exert pacemaker-like control over all other hormones”. Crockford, S.J., Rhythms of Life: Thyroid Hormone and the Origin of Species, Trafford Publishing, Victoria, BC, p. 88, 2006.

31. Crockford, ref. 30, p. 107.

32. Of course, if there was linkage between longevity genes and robusticity genes, and the linkage was broken, then theoretically you could have robust humans with short lifespans.

33. Wieland, C., Decreased lifespans: have we been looking in the right place?, J. Creation (TJ) 8(2):138–141, 1994; creation.com/lifespan.

34. Wieland, ref. 33, p. 141.

35. Sperber, G.H., Craniofacial Development, BC Decker Inc., Hamilton, Ontario, p. 85, 2001.

36. Defined here as: “the upper third of the face is predominantly of neurocranial composition, with the frontal bone of the calvaria primarily responsible for the forehead”. From: Sperber, ref. 35, p. 103.

37. Sperber, ref. 35, pp. 103–104.

38. Sperber, ref. 35, p. 100.

39. Lieberman, D.E., Sphenoid shortening and the evolution of modern human cranial shape, Nature 393:158, 1998.

40. Cartmill, M. and Smith, F.H., The Human Lineage, Wiley-Blackwell, NJ, p. 384, 2009.

41. Cartmill and Smith, ref. 40, p. 330.

42. Coolidge, F.L. and Wynn, T., The Rise of Homo sapiens: The Evolution of Modern Thinking, Wiley-Blackwell, West Sussex, UK, p. 64, 2009.

43. Martini et al., ref. 12, p. 449.

44. For a list of estimated cranial capacities of Homo erectus and other human fossil specimens, as well as other alleged hominids, see: Schoenemann, P.T., Hominid brain evolution; in: Begun, D.R. (Ed.), A Companion to Paleoanthropology, Wiley-Blackwell, West Sussex, UK, pp. 142–150, 2013.

45. Line, P., The mysterious hobbit, J. Creation 20(3):17–24, 2006.

46. Brown, P., Sutikna, T., Morwood, M.J., Soejono, R.P., Jatmiko, Saptomo, E.W. and Due, R.A., A new small-bodied hominin from the late Pleistocene of Flores, Indonesia, Nature 431:1055, 2004.

47. This position was put forth by Susan Anton, a Homo erectus expert. See: Morwood, M. and Van Oosterzee, P., A New Human: The Startling Discovery and Strange Story of the “Hobbits” of Flores, Indonesia, Updated Paperback Edition, Left Coast Press, Walnut Creek, CA, p. 203, 2009.

48. This was the position of Colin Groves from the Australian National University (ANU) in Canberra. See: Morwood and Van Oosterzee, ref. 47, pp. 203–204.

49. A microcephalic human is a pathological human with a very small head. This was the position which started the controversy, first promoted by Professor Teuku Jacob from Indonesia (now deceased), Dr Alan Thorne (also deceased; formerly ANU), and Professor Maciej Henneberg (Univ. of Adelaide). See Line, ref. 45, pp. 18–19.

50. Although originally proposing the dwarfed H. erectus idea, Peter Brown would later back away from that, suggesting instead (in 2009) “that the Liang Bua hominins arrived on Flores in the middle Pleistocene, essentially with the skeletal and dental characteristics that distinguished them until they became extinct at approximately 18 ka. Comparison with Dmanisi H. erectus suggests that the Liang Bua hominin lineage left Africa before 1.8 Ma, and possibly before the evolution of the genus Homo. We believe that these distinctive, tool making, small-brained australopithecine-like, obligate bipeds moved from the Asian mainland through the Lesser Sunda Islands to Flores, before the arrival of H. erectus and H. sapiens in the region.” From: Brown, P. and Maeda, T., Liang Bua Homo floresiensis mandibles and mandibular teeth: a contribution to the comparative morphology of a new hominin species, J. Human Evolution 57:592–593, 2009; see also Falk, D., The Fossil Chronicles: How Two Controversial Discoveries Changed our View of Human Evolution, University of California Press, Berkeley, CA, p. 184, 2011.

51. This hypothesis was first suggested in a publication in 2008, as follows: Obendorf, P.J., Oxnard, C.E. and Kefford, B.J., Are the small human-like fossils found on Flores human endemic cretins?, Proceedings of the Royal Society B 275:1287–1296, 2008.

52. Oxnard, C., Obendorf, P.J. and Kefford, B.J., Post-cranial skeletons of hypothyroid cretins show a similar anatomical mosaic as Homo floresiensis, PLoS ONE 5(9):e13018, 2010.

53. Oxnard, C., Ghostly Muscles, Wrinkled Brains, Heresies and Hobbits, World Scientific, Singapore, pp. 289–347, 2008.

54. Falk, ref. 50, p. 156.

55. Oxnard, ref. 53, pp. 303, 342.

56. Chen, Z-P., Cretinism revisited, Best Practice & Research Clinical Endocrinology & Metabolism 24:40–43, 2010.

57. Dobson, J.E., The iodine factor in health and evolution, The Geographical Review 88(1):4–5, 1998.

58. Oxnard, ref. 53, pp. 333–344.

59. Oxnard et al., ref. 52, pp. 1–2.

60. Obendorf et al., ref. 51, p. 1287.

61. Oxnard, ref. 53, pp. 335–337.

62. Kaifu, Y., Baba, H., Sutikna, T., Morwood, M.J., Kubo, D., Saptomo, E.W., Jatmiko, Awe, R.D. and Djubiantono, T., Craniofacial morphology of Homo floresiensis: Description, taxonomic affinities, and evolutionary implication, J. Human Evolution 61:644–682, 2011.

63. Kaifu et al., ref. 62, p. 653.

64. Kaifu et al., ref. 62, p. 644.

65. Oxnard et al., ref. 52, p. 7.

66. Oxnard et al., ref. 65. Note that in the quote, ref. 7 refers to: Obendorf et al., ref. 51. Note also that in the quote, ref. 30 refers to: Berger, L.R., Churchill, S.E., De Klerk, B. and Quinn, R.L., Small-Bodied Humans from Palau, Micronesia, PLoS ONE 3(3):e1780, 2008.

67. Kubo, D., Kono, R.T. and Kaifu, Y., Brain size of Homo floresiensis and its evolutionary implications, Proceedings of the Royal Society B 280:20130338, 2013; http://dx.doi.org/10.1098/rspb.2013.0338.

68. Schoenemann, ref. 44, p. 144; Lordkipanidze, D. et al., A fourth hominin skull from Dmanisi, Georgia, The Anatomical Record Part A 288A:1150, 2006.

69. Oxnard, ref. 61, p. 342.

70. Oxnard, ref. 61, p. 339.

71. Schoenemann, ref. 44, pp. 144–145.
